- Overview

- Definitions

- Requirements

- Design Highlights

- Functional Specification

- Logical Specification

- Conformance

- CMDLD-addb

- Unit Tests

- Analysis

- References

Overview

This document explains the detailed level design for the generic part of the copy machine module.

Definitions

Please refer to the "Definitions" section in "HLD of copy machine and agents" in References.

Requirements

The requirements below are grouped by various milestones.

Copy machine Requirements

- r.cm.generic.invoke Copy machine generic routines should be invoked from copy machine service specific code.

- r.cm.generic.specific Copy machine generic routines should accordingly invoke copy machine specific operations.

- r.cm.synchronise Copy machine generic should provide synchronised access to members of cm.

- r.cm.resource.manage Copy machine specific resources should be managed by copy machine specific implementation, e.g. buffer pool, etc.

- r.cm.failure Copy machine should handle various types of failures.

Design Highlights

- Copy machine is implemented as a Motr state machine.

- All the registered types of copy machines can be initialised using various interfaces and also from motr setup.

- Once started, each copy machine type is registered with the request handler as a service.

- A copy machine service can be started using "motr setup" utility or separately.

- Once started, the copy machine remains idle until a further event happens.

- Copy machine copy packets are implemented as foms, and are started by copy machine using request handler.

- A copy machine type specific event triggers the copy machine operation (e.g. TRIGGER FOP for SNS Repair). This allocates copy machine specific resources and creates copy packets.

The complete data restructuring process of the copy machine follows the non-blocking processing model of the Motr design.

Copy machine maintains the list of aggregation groups being processed and implements a sliding window over this list to keep track of the restructuring process and manage resources efficiently.

Logical Specification

Please refer to the "Logical Specification" section in "HLD of copy machine and agents" in References.

- Copy Machine State diagram

- Copy machine setup

- Copy machine prepare

- Copy machine ready

- Copy machine operation start

- Copy machine sliding window

- Copy machine sliding window persistence

- Copy machine stop

- Copy machine finalisation

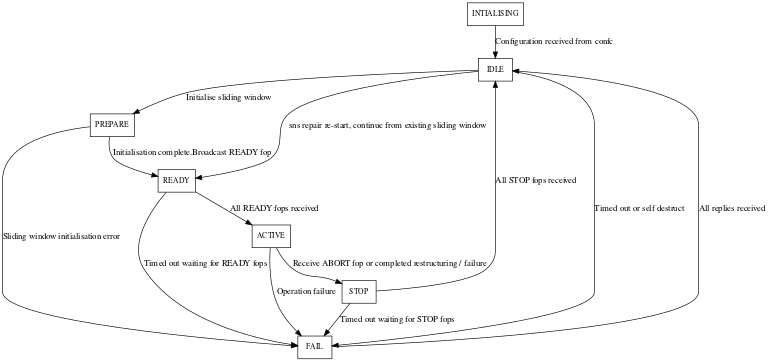

Copy Machine State diagram

Copy machine setup

After the copy machine is successfully initialised (m0_cm_init()), it is configured as part of the copy machine service startup by invoking m0_cm_setup(). This performs copy machine specific setup by invoking m0_cm_ops::cmo_setup(). On success, the copy machine transitions into the M0_CMS_IDLE state. On setup failure, the copy machine transitions into the M0_CMS_FAIL state; this also fails the copy machine service startup, so the copy machine is finalised during copy machine service finalisation.
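The setup transition described above can be sketched as follows. This is a minimal illustration, not the Motr implementation: the `toy_` types, the `TOY_CMS_INIT` starting state, and the two example hooks are assumptions made for the sketch; only the idea of a generic routine calling a type-specific `cmo_setup()` hook and choosing IDLE or FAIL from its result comes from the text.

```c
#include <assert.h>
#include <errno.h>
#include <stddef.h>

/* Illustrative states; names mirror the text, values are arbitrary. */
enum toy_cm_state { TOY_CMS_INIT, TOY_CMS_IDLE, TOY_CMS_FAIL };

struct toy_cm;

/* Hypothetical stand-in for the type-specific operations vector. */
struct toy_cm_ops {
	int (*cmo_setup)(struct toy_cm *cm);
};

struct toy_cm {
	enum toy_cm_state        cm_state;
	const struct toy_cm_ops *cm_ops;
};

/* Generic setup: delegate to the specific hook, then pick the next state. */
static int toy_cm_setup(struct toy_cm *cm)
{
	int rc;

	assert(cm != NULL && cm->cm_ops != NULL);
	assert(cm->cm_state == TOY_CMS_INIT);

	rc = cm->cm_ops->cmo_setup(cm);
	cm->cm_state = rc == 0 ? TOY_CMS_IDLE : TOY_CMS_FAIL;
	return rc;
}

/* Two example hooks: one succeeds, one reports an allocation failure. */
static int toy_setup_ok(struct toy_cm *cm)   { (void)cm; return 0; }
static int toy_setup_fail(struct toy_cm *cm) { (void)cm; return -ENOMEM; }
```

Keeping the state change in the generic routine means a specific copy machine only has to report success or failure from its hook.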

Copy machine prepare

Initialise the local sliding window and the sliding window persistent store. Persist the sliding window after initialisation and proceed to the READY phase.

Copy machine ready

In case of multiple nodes, every copy machine replica allocates an instance of struct m0_cm_proxy for each remote replica and establishes an rpc connection and session with it. See Copy machine proxy for more details. After the rpc connections are successfully established, the copy machine specific m0_cm_ops::cmo_ready() operation is invoked to further set up the specific copy machine data structures. After the proxies representing the remote replicas are created, a READY FOP is allocated for each remote replica and initialised with the calculated local sliding window. The copy machine then broadcasts these READY FOPs to every remote replica using the rpc connection in the corresponding m0_cm_proxy. A READY FOP is a one-way fop and thus does not have a reply associated with it. Once every replica receives READY FOPs from all the corresponding remote replicas, the copy machine proceeds to the START phase.

- See also

- struct m0_cm_ready
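The rule that the START phase begins only after a READY FOP has arrived from every remote replica can be sketched as a simple counter over proxies. All names below (`toy_proxy`, `toy_ready_cm`, `toy_cm_ready_received()`) are hypothetical, and counting a duplicate READY from the same replica only once is an assumption of this sketch, not something the text specifies.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

#define TOY_MAX_PROXIES 8

/* Hypothetical stand-in for one remote replica. */
struct toy_proxy {
	bool px_ready_received;
};

struct toy_ready_cm {
	struct toy_proxy cm_proxies[TOY_MAX_PROXIES];
	size_t           cm_proxy_nr;   /* number of remote replicas */
	size_t           cm_ready_nr;   /* distinct READY fops seen so far */
};

/*
 * Record a READY fop from replica `idx`. Returns true once READY has been
 * received from every remote replica, i.e. when START may begin.
 * Assumption: a duplicate READY from the same replica is counted once.
 */
static bool toy_cm_ready_received(struct toy_ready_cm *cm, size_t idx)
{
	assert(cm != NULL && cm->cm_proxy_nr <= TOY_MAX_PROXIES);
	assert(idx < cm->cm_proxy_nr);

	if (!cm->cm_proxies[idx].px_ready_received) {
		cm->cm_proxies[idx].px_ready_received = true;
		cm->cm_ready_nr++;
	}
	return cm->cm_ready_nr == cm->cm_proxy_nr;
}
```

Because READY is one-way, the receiver's own bookkeeping is the only place where "all replicas are ready" can be decided.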

Copy machine operation start

After the copy machine service is successfully started, it is ready to perform its respective tasks (e.g. SNS Repair). On receiving a trigger event (i.e. a failure in the case of SNS repair), the copy machine transitions into the M0_CMS_ACTIVE state once the copy machine specific startup tasks are complete (m0_cm_start()). If copy machine startup fails, the copy machine transitions into the M0_CMS_FAIL state; once the failure is handled, it transitions back into the M0_CMS_IDLE state and waits for further events.
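As a rough illustration of the start path, the sketch below models the three states named above with a hypothetical start hook. The structure, the hook signature, and the separate `toy_cm_fail_handled()` step are assumptions made for the example; the real m0_cm_start() is not reproduced here.

```c
#include <assert.h>
#include <errno.h>
#include <stddef.h>

enum toy_start_state { TOY_CMS_IDLE, TOY_CMS_ACTIVE, TOY_CMS_FAIL };

struct toy_start_cm {
	enum toy_start_state cm_state;
	int                (*cm_start_hook)(void);  /* hypothetical specific start */
};

/* Trigger handling: IDLE -> ACTIVE on success, IDLE -> FAIL on error. */
static int toy_cm_start(struct toy_start_cm *cm)
{
	int rc;

	assert(cm != NULL && cm->cm_start_hook != NULL);
	assert(cm->cm_state == TOY_CMS_IDLE);

	rc = cm->cm_start_hook();
	cm->cm_state = rc == 0 ? TOY_CMS_ACTIVE : TOY_CMS_FAIL;
	return rc;
}

/* Once the failure has been handled, wait for further events in IDLE. */
static void toy_cm_fail_handled(struct toy_start_cm *cm)
{
	assert(cm->cm_state == TOY_CMS_FAIL);
	cm->cm_state = TOY_CMS_IDLE;
}

/* Example hooks used to exercise both paths. */
static int toy_start_ok(void)   { return 0; }
static int toy_start_fail(void) { return -EIO; }
```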

Copy packet pump

Copy machine implements a special FOM type, viz. the copy packet pump FOM, to create copy packets (see also Copy Packet DLD). The copy packet pump FOM creates copy packets as long as resources permit and goes to sleep when no more packets can be created. Using the non-blocking FOM infrastructure to create copy packets enables the copy machine to efficiently handle the blocking operations performed while acquiring various resources. The copy packet pump FOM is created when the copy machine operation starts and is woken up (iff it was IDLE) as the required resources become available (e.g. when a copy packet is finalised and its corresponding buffer is released to the copy machine specific buffer pool).

- See also

- struct m0_cm_cp_pump
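The pump behaviour (create packets while resources last, sleep, wake when a buffer comes back) can be sketched with a plain counter standing in for the buffer pool. This is a synchronous toy under stated assumptions: `toy_pump`, `toy_pump_run()` and `toy_cp_fini()` are hypothetical names, and the real pump is a FOM scheduled by the request handler rather than a direct function call.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical pump; p_free_bufs models the type-specific buffer pool. */
struct toy_pump {
	unsigned p_free_bufs;   /* buffers available for new copy packets */
	unsigned p_created;     /* copy packets created so far */
	bool     p_idle;        /* true while the pump is asleep */
};

/* Create copy packets while buffers last, then go idle. */
static void toy_pump_run(struct toy_pump *p)
{
	assert(p != NULL);
	p->p_idle = false;
	while (p->p_free_bufs > 0) {
		p->p_free_bufs--;   /* the new packet takes one buffer */
		p->p_created++;
	}
	p->p_idle = true;           /* nothing left: sleep until woken */
}

/* A finalised packet returns its buffer; wake the pump only if idle. */
static void toy_cp_fini(struct toy_pump *p)
{
	assert(p != NULL);
	p->p_free_bufs++;
	if (p->p_idle)
		toy_pump_run(p);
}
```

Waking the pump only when it is idle avoids re-entering it while it is already creating packets.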

Copy machine activation

After creating initial number of copy packets, copy machine broadcasts READY FOPs with its corresponding sliding window information to all its replicas in the pool. Every copy machine replica, after receiving READY FOPs from all its replicas in the pool, transitions into M0_CMS_ACTIVE state.

Copy machine sliding window

Generic copy machine infrastructure provides data structures and interfaces which are used to implement the sliding window. The copy machine sliding window is based on aggregation group identifiers. Copy machine maintains two lists of aggregation groups: i) aggregation groups having only outgoing copy packets, viz. m0_cm::cm_aggr_grps_out; ii) aggregation groups having only incoming copy packets, viz. m0_cm::cm_aggr_grps_in.

- Note

- Both lists are insertion sorted, in ascending order.

Copy machine sliding window is mainly implemented on the m0_cm::cm_aggr_grps_in list, i.e. for aggregation groups having incoming copy packets. The idea is that every sender copy machine replica should send a copy packet iff the receiving replica is able to receive it. If the receiver is able to receive a copy packet for a particular aggregation group, this implies that the corresponding aggregation group is already created, initialised and present in the receiver's m0_cm::cm_aggr_grps_in list.

Generic copy machine infrastructure provides interfaces to query and update the sliding window, viz. m0_cm_ag_hi(), m0_cm_ag_lo(), m0_cm_sw_update() and m0_cm_ag_advance(). In addition to the generic interfaces, a copy machine specific operation, m0_cm_ops::cmo_ag_next(), is implemented by the specific types of copy machine to calculate the next aggregation group identifier to be processed. This may involve various parameters, e.g. memory, network bandwidth, cpu, etc.

The sliding window is typically initialised during copy machine startup, i.e. the M0_CMS_READY phase, and updated during finalisation of a completed aggregation group (i.e. an aggregation group for which all the copy packets are processed). Periodically, the updated sliding window is communicated to the remote replicas. See m0_cm_proxy_sw_update_ast_post() and m0_cm_proxy_remote_update().
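A minimal sketch of an insertion-sorted window over incoming aggregation groups is given below. It is a simplification under stated assumptions: aggregation group identifiers are reduced to `uint64_t`, the list is a fixed-size array, and every `toy_sw_*` name is hypothetical. These helpers are not the m0_cm_ag_lo(), m0_cm_ag_hi() or m0_cm_ag_advance() interfaces named above.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define TOY_SW_MAX 16

/* Hypothetical window: incoming aggregation group ids, ascending. */
struct toy_sw {
	uint64_t sw_ids[TOY_SW_MAX];
	size_t   sw_nr;
};

/* Insertion-sorted add; returns false when the window is full. */
static bool toy_sw_add(struct toy_sw *sw, uint64_t id)
{
	size_t i;

	if (sw->sw_nr == TOY_SW_MAX)
		return false;
	for (i = sw->sw_nr; i > 0 && sw->sw_ids[i - 1] > id; --i)
		sw->sw_ids[i] = sw->sw_ids[i - 1];
	sw->sw_ids[i] = id;
	sw->sw_nr++;
	return true;
}

/* Lowest and highest ids currently in the window. */
static uint64_t toy_sw_lo(const struct toy_sw *sw)
{
	assert(sw->sw_nr > 0);
	return sw->sw_ids[0];
}

static uint64_t toy_sw_hi(const struct toy_sw *sw)
{
	assert(sw->sw_nr > 0);
	return sw->sw_ids[sw->sw_nr - 1];
}

/* A sender may transmit for `id` only if the receiver already holds it. */
static bool toy_sw_can_receive(const struct toy_sw *sw, uint64_t id)
{
	size_t i;

	for (i = 0; i < sw->sw_nr; ++i)
		if (sw->sw_ids[i] == id)
			return true;
	return false;
}

/* Drop the completed lowest group, moving the window forward. */
static void toy_sw_complete_lo(struct toy_sw *sw)
{
	assert(sw->sw_nr > 0);
	memmove(&sw->sw_ids[0], &sw->sw_ids[1],
		(sw->sw_nr - 1) * sizeof sw->sw_ids[0]);
	sw->sw_nr--;
}
```

Keeping the list sorted makes the low and high ends of the window available without a scan, which is what a sender needs to know before transmitting.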

Updating the local sliding window and saving it to the persistent store is implemented through the sliding window update FOM, which allows both tasks to be performed asynchronously. See Sliding window update fom.

- See also

- struct m0_cm_sw_update

- m0_cm_sw_update_start()

Copy machine sliding window persistence

Copy machine sliding window is an in-memory data structure that keeps track of the progress of an operation. When a failure happens, e.g. a software or node crash, this in-memory sliding window information is lost, and the copy machine cannot tell from which point to resume the operation. To solve this problem, the copy machine transactionally stores some information about the completed operations on persistent storage. When the node and/or copy machine restarts after a failure, it reads this information from persistent storage and resumes its operations.

The following information is stored on persistent storage: i) the copy machine id, i.e. m0_cm::cm_id; ii) the id of the last completed aggregation group.

This information is stored in BE. It is also inserted into BE dictionary, with the key "CM ${ID}". Copy machine can find a pointer to this information from BE dictionary with proper key.

The following interfaces are provided to manage this information,

- m0_cm_sw_store_init() Init data on persistent storage.

- m0_cm_sw_store_load() Load data from persistent storage.

- m0_cm_sw_store_update() Update data to the last completed AG.

These interfaces are used in various copy machine operations to manage the persistent information. For example, m0_cm_sw_store_load() is used in the copy machine start routine to check whether a previous unfinished operation is in progress. m0_cm_sw_store_update() is called when the sliding window advances. m0_cm_sw_store_complete() is called when a copy machine operation completes; in this case, the stored AG id is deleted from storage to indicate that the operation has completed successfully. If the node fails at this point and then restarts, it loads from storage, and -ENOENT indicates that no copy machine operation is pending.
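The load, init, update and complete life cycle can be sketched with an in-memory record standing in for the persistent store. Everything here is an assumption for illustration: the `toy_` functions only mirror the names of the interfaces above and do not reproduce their signatures, there are no BE transactions, and the decimal rendering of the id in the "CM ${ID}" key is a guess.

```c
#include <assert.h>
#include <errno.h>
#include <stdbool.h>
#include <stdint.h>
#include <stdio.h>

/* Hypothetical one-slot "persistent store" for a single copy machine. */
struct toy_store {
	bool     st_present;   /* is there a record on storage? */
	uint64_t st_cm_id;
	uint64_t st_last_ag;   /* last completed aggregation group id */
};

/* Build a dictionary key in the "CM ${ID}" form (decimal id assumed). */
static int toy_sw_key(char *buf, size_t len, uint64_t cm_id)
{
	return snprintf(buf, len, "CM %llu", (unsigned long long)cm_id);
}

static int toy_sw_store_load(const struct toy_store *st, uint64_t *last_ag)
{
	if (!st->st_present)
		return -ENOENT;        /* fresh operation, nothing to resume */
	*last_ag = st->st_last_ag;
	return 0;
}

static void toy_sw_store_init(struct toy_store *st, uint64_t cm_id)
{
	st->st_present = true;
	st->st_cm_id   = cm_id;
	st->st_last_ag = 0;
}

static void toy_sw_store_update(struct toy_store *st, uint64_t last_ag)
{
	assert(st->st_present);
	st->st_last_ag = last_ag;
}

static void toy_sw_store_complete(struct toy_store *st)
{
	st->st_present = false;        /* later loads return -ENOENT */
}

/* Start-up path: resume from a stored record or create a new one. */
static uint64_t toy_cm_resume(struct toy_store *st, uint64_t cm_id)
{
	uint64_t last_ag = 0;

	if (toy_sw_store_load(st, &last_ag) == -ENOENT)
		toy_sw_store_init(st, cm_id);
	return last_ag;
}
```

Deleting the record on completion is what lets a restarted node tell "nothing pending" apart from "resume from the stored aggregation group".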

The call sequence of interface and sliding window update FOM execution is as below:

                          |
                          |
                          V
         ---------------------------------------
         |       m0_cm_sw_store_load()         |
         | Read sliding window from persistent |
         | store to continue from any          |
         | previously pending repair operation.|
         | Also start sliding update FOM.      |
         ---------------------------------------
                     /         \
                    /           \
          ret == 0 /             \ ret == -ENOENT
    A restart from failure.       A fresh new operation.
    A valid sw is returned.       No sw info on storage.
                  /               \
                 V                 V
    -----------------------------  ----------------------------
    | setup sliding window with |  | m0_cm_sw_store_init():   |
    | the returned sw.          |  | Allocate a persistent sw |
    | CM operation will start   |  | and init it to zero. CM  |
    | from this sw.             |  | starts from scratch      |
    -----------------------------  ----------------------------
                 \                 /
                  \               /
                   \             /
                    V SWU_STORE V
             ---------------------------  operation completed
    -------> | m0_cm_sw_store_update() |----------------------->
    |        ---------------------------                       |
    |                     |                                    |
    |                     |                                    |
    <---------------------V                                    |
      operation continue                                       |
                                               SWU_COMPLETE    V
                                          ----------------------------
                                          |m0_cm_sw_store_complete():|
                                          |delete sw info from       |
                                          |persistent storage.       |
                                          |m0_cm_sw_store_load()     |
                                          |returns -ENOENT after this|
                                          |call.                     |
                                          ----------------------------

Copy machine stop

Once the operation completes successfully, the copy machine performs the required tasks (e.g. updating layouts) by invoking m0_cm_stop(), which transitions the copy machine back to the M0_CMS_IDLE state. The copy machine also invokes m0_cm_stop() in case of operational failure, to broadcast STOP FOPs to its other replicas in the pool, indicating failure. This is handled in a way specific to the copy machine type.

Copy machine finalisation

As the copy machine is implemented as a m0_reqh_service, the copy machine finalisation path is m0_reqh_service_stop()->rso_stop()->m0_cm_fini(). Before m0_reqh_service_stop() is invoked, m0_reqh_shutdown_wait() is called, which returns when all the FOMs in the given reqh are finalised. Although there is a possibility that a copy machine operation is in progress while the reqh is being shut down, this situation is taken care of by the reqh shutdown mechanism mentioned above: the copy machine pump FOM (m0_cm::cm_cp_pump) is created when the copy machine operation starts and destroyed when the copy machine operation stops, and until then it is alive within the reqh. Thus, by using the m0_reqh_shutdown_wait() mechanism, we are sure that the copy machine is IDLE and its operation is completed before m0_cm_fini() is invoked.

- Note

- Presently services are stopped only during reqh shutdown.

Threading and Concurrency Model

- Copy machine is implemented as a state machine, and thus does not have its own thread. It runs in the context of reqh threads.

- Copy machine starts as a service and is registered with the request handler.

- The cmtype_mutex is used to serialise the operation on cmtypes_list.

- Access to the members of struct m0_cm is serialised using m0_cm::cm_sm_group::s_mutex.

Conformance

This section briefly describes interfaces and structures conforming to above mentioned copy machine requirements.

- i.cm.generic.invoke Copy machine generic routines are invoked from copy machine specific code.

- i.cm.generic.specific Copy machine generic routines accordingly invoke copy machine specific operations.

- i.cm.synchronise Copy machine provides synchronised access to its members using m0_cm::cm_sm_group::s_mutex.

- i.cm.failure Copy machine handles various types of failures through m0_cm_fail() interface.

- i.cm.resource.manage Copy machine specific resources are managed by copy machine specific implementation, e.g. buffer pool, etc.

Unit Tests

CM SETUP Unit Tests

- Start copy machine and SNS cm service. Check that all the states of the copy machine align with the state diagram.

- Stop copy machine and check cleanup.

System Tests

NA

Analysis

NA

References

The following are references to the documents from which the design is derived. For documentation links, please refer to the file doc/motr-design-doc-list.rst.

- Copy Machine redesign

- HLD of copy machine and agents

- HLD of SNS Repair